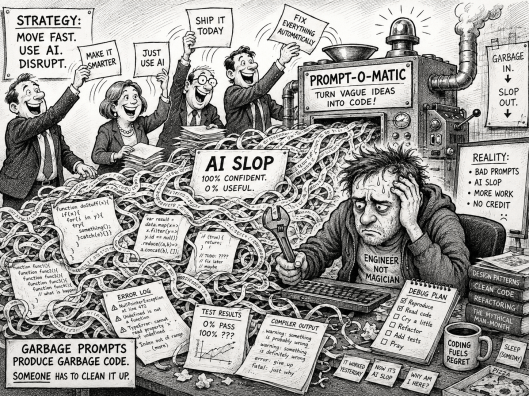

There are a lot of posts on various platforms about how AI is generating ‘slop’, and it is actually costing a lot of time to take what was generated and put it right. Cleaning up after AI is something people are even incorporating into their online biographies. On the other hand, others indicate they’re getting good results. So what is the reality? I think what we’re seeing is a combination of factors, but there is a healthy dose of human behaviour amplifying the issue.

There is no getting around the fact that how well a publicly available foundation model performs depends on the availability of content for training. Asking for simple Python logic, with the request expressed clearly and precisely, is likely to yield positive results – that’s simply a function of the amount of accessible content on the web. If you asked an AI to generate formal method notations like Z or VDM – good luck.

But when we see what, on the surface, looks good, it is easy to be taken in and start to trust the LLM. Combine that with several other factors:

- Humans, by our nature, tend to minimise effort (or, if you want to be crass, we are lazy). You can see this in everything from UX design principles that advocate avoiding ‘cognitive load’ and ‘choice overload’ (the idea that we can only cope with so much information in working memory; beyond that, we are more likely to make mistakes or need to apply more cognitive effort), through to George Kingsley Zipf’s Human Behavior and the Principle of Least Effort.

- When we hand a task to an AI, the way LLMs work means that it will seek to provide an answer rather than say, sorry, I can’t get an answer (if it did that, the principle of least effort would explain why we’d give up on it). So we’re going to get a result, whereas with the non-LLM route we won’t see that it’s incorrect until we’ve finished. When it comes to coding, the LLM is unlikely to make the coding errors we can make as humans, but the code it produces may not be as elegant or efficient, may not address all the edge cases, and may even miss the problem we want to solve. But the outcome will be executable.

- The next issue is the quality of prompting: as humans, we have (generally) good long-term memory, and even if we forget specifics, we build a strong contextual understanding that we draw on. The LLM doesn’t have this; it doesn’t know whether we’re trying an idea out or writing code that needs to be bombproof and extremely scalable. We have to define that very explicitly. If we’re working with a new or junior developer, we understand that we shouldn’t make these assumptions and will seek verbal and nonverbal feedback if there are issues with clarity or expectations.

- When building things manually, each step we take to create the solution (whether that’s code or a PowerPoint) is slower, and we have more time to evaluate what we want to do, how we want to do it, and why we want to do things a particular way. In some respects, the LLM approach is like code reviewing. For a proper review, the reviewer is going to walk through each line of code and evaluate it – a process that can be lengthy. But under time pressure, what often happens is that we take a fast first pass to look for the ‘bad smells’ and pick up on any obvious issues. Then we zero in on the bad smells, or at least the worst ones, and look more closely. But the time pressure, the knowledge that we have tooling to help eliminate issues, and the mental effort of quickly understanding a lot of code all take their toll. This, to varying degrees, is exactly what happens with LLM artefacts, except that the rate at which code can be properly reviewed is now a real issue relative to the rate of generation.

- Commerce has always been about either innovating to compete or doing things more quickly and cheaply. That pressure has grown as technology has advanced, creating the potential to do more. As a result, it is not surprising to see that pressure result in code being generated, even if that is likely to drive an unwitting accumulation of technical debt that will bite in the years to come. Furthermore, recognising poor-quality code takes experience, which means expensive engineers. By contrast, it is easy to see how a non-technical person can generate a basic desktop utility using ChatGPT.

As you can see, there are plenty of things we, as humans, can do to mitigate ‘AI slop’ and get AI delivering value, quality, and velocity.
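To illustrate the prompting point above, here is a minimal sketch of stating the context explicitly rather than leaving the LLM to guess it. Everything in it is an assumption for illustration: the `TaskContext` fields, the `build_prompt` helper and the wording of the prompt are hypothetical, and the resulting text would still need to be passed to whichever LLM you use.

```python
from dataclasses import dataclass, field


@dataclass
class TaskContext:
    """Context a colleague would pick up implicitly (all fields are illustrative)."""
    purpose: str       # e.g. "throwaway prototype" or "production service"
    quality_bar: str   # e.g. "bombproof and extremely scalable"
    constraints: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)


def build_prompt(task: str, ctx: TaskContext) -> str:
    """Assemble a prompt that spells out the context instead of assuming it."""
    lines = [
        f"Task: {task}",
        f"Purpose: {ctx.purpose}",
        f"Quality bar: {ctx.quality_bar}",
    ]
    if ctx.constraints:
        lines.append("Constraints:")
        lines.extend(f"- {c}" for c in ctx.constraints)
    if ctx.edge_cases:
        lines.append("Edge cases to handle:")
        lines.extend(f"- {e}" for e in ctx.edge_cases)
    # Invite the clarifying questions a junior developer would ask.
    lines.append("If anything above is ambiguous, ask before writing code.")
    return "\n".join(lines)


if __name__ == "__main__":
    ctx = TaskContext(
        purpose="production service",
        quality_bar="bombproof and extremely scalable",
        constraints=["standard library only"],
        edge_cases=["empty input", "malformed rows"],
    )
    print(build_prompt("Parse a CSV file of orders", ctx))
```

The design choice is simply to make the things we would tell a junior developer required fields, so they cannot be skipped when we are in a hurry.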

Conclusion

The bottom line here is an issue of expectation. We wouldn’t be so harsh as to ask someone with dyscalculia to produce a company’s accounts using only a pencil and paper. Another way to look at it: you wouldn’t ask an unqualified accountant to do your tax return. But that is often what is happening. Someone with dyscalculia could easily ‘hallucinate’ the numbers. An unqualified person is not going to know all the rules needed to complete a tax return well enough to minimise tax exposure.

To err is human; to persist in error is diabolical

Saint Augustine

It is human to make mistakes, and poorly directing (or training) an LLM is certainly an error. But we know this is possible, so we should consider what the code is for and take appropriate steps to mitigate it, working to improve the way prompts are given and context is provided to enable better outcomes.

What is clear to me is that ‘AI slop’ isn’t going away soon, and that, as engineers, we have to get better at prompting to get the best code we can out of an LLM. It would be nice to think that the industry will realise that LLMs are not a panacea, and that you still need those expensive engineers to prompt an LLM so that it doesn’t generate unnecessary reams of low-grade, brittle code.

The question really has to be: who is going to build an LLM and agents that can pre-screen code and call out AI ‘slop’, saving time for code reviewers (particularly those who are looking after the open-source solutions on which so many of us depend)? If Anthropic’s Mythos finds 20-year-old bugs, we should be able to help protect open-source projects from low-quality, poorly prompted AI-generated code.
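To give a feel for what pre-screening could mean at its very simplest, here is a rough sketch that uses Python’s standard `ast` module to flag a few ‘bad smells’ before a human reviewer looks at the code. This is not an LLM and not a real slop detector; the `prescreen` function, the smells chosen and the 50-line threshold are all assumptions picked for illustration.

```python
import ast


def prescreen(source: str, max_function_lines: int = 50) -> list[str]:
    """Return warnings for a few hand-picked smells (illustrative, not exhaustive)."""
    found = []  # (line number, message) pairs, sorted before returning
    tree = ast.parse(source)
    for node in ast.walk(tree):
        # A bare 'except:' swallows every error, including ones we care about.
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            found.append((node.lineno, "bare 'except:' hides errors"))
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = node.end_lineno - node.lineno + 1
            if length > max_function_lines:
                found.append(
                    (node.lineno, f"function '{node.name}' is {length} lines long")
                )
            if ast.get_docstring(node) is None:
                found.append(
                    (node.lineno, f"function '{node.name}' has no docstring")
                )
    return [f"line {lineno}: {message}" for lineno, message in sorted(found)]


if __name__ == "__main__":
    sample = (
        "def load(path):\n"
        "    try:\n"
        "        return open(path).read()\n"
        "    except:\n"
        "        return None\n"
    )
    for warning in prescreen(sample):
        print(warning)
```

A check like this only catches surface-level smells; judging whether the code actually solves the right problem still needs an experienced reviewer, or something far more capable than a handful of rules.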
