A couple of years ago I got to discuss some of the design ideas behind API Platform Cloud Service. One of the points we discussed was how API Platform CS kept the configuration of APIs entirely within the platform, which meant some version management tasks couldn’t be applied like any other code. Whilst we’ve solved that problem (and you can see the various tools for this here API Platform CS tools). The argument made that your API policies are pretty important, if they get into the public domain then people can better understand to go about attacking your APIs and possibly infer more.

Move on a couple of years, Oracle’s 2nd generation cloud is established an maturing rapidly (OCI) and the organisational changes within Oracle mean PaaS was aligned to SaaS (Oracle Integration Cloud, Visual Builder CS as examples) or more cloud native IaaS. The gateway which had a strong foot in both camps eventually became aligned to IaaS (note that this doesn’t mean that the latest evolution of the API platform (Oracle Infrastructure API) will lose its cloud agnostic capabilities, as this is one of unique values of the solution, but over time the underpinnings can be expected to evolve).

Any service that has elements of infrastructure associated with it has been mandated to use Terraform as the foundation for definition and configuration. The Terraform mandate is good, we have some consistency across products with something that is becoming a defacto standard. However, by adopting the Terraform approach does mean all of our API configurations are held outside the product, raising the security risk of policy configuration is not hidden away, but conversely configuration management is a lot easier.

This has had me wondering for a long time, with the use of Terraform how do we mitigate the risks that API CS’s approach was trying to secure? But ultimately the fundamental question of security vs standardisation.

Mitigation’s

Any security expert will tell you the best security is layered, so if one layer is found to be vulnerable, then as long as the next layer is different then you’re not immediately compromised.

What this tells us is, we should look for ways to mitigate or create additional layers of security to protect the security of the API configuration. These principles probably need to extend to all Terraform files, after all it not only identifies security of not just OCI API, but also WAF, networks that are public and how they connect to private subnets (this isn’t an issue unique to Oracle, its equally true for AWS and Azure). Some mitigation actions worth considering:

- Consider using a repository that can’t be accidentally exposed to the net – configuration errors is the OWASP Top 10. So let’s avoid the mistake if possible. If this isn’t an option, then consider how to mitigate, for example …

- Strong restrictions on who can set or change visibility/access to the repo

- Configure a simple regular check that looks to see if your repos have been accidentally made publicly visible. The more frequent the the check the smaller the potential exposure window

- Make sure the Terraform configurations doesn’t contain any hard coded credentials, there are tools that can help spot this kind of error, so use them. Tools exist to allow for the scanning of such errors.

- Think about access control to the repository. It is well known that a lot of security breaches start within an organisation.

- Terraform supports the ability to segment up and inject configuration elements, using this will allow you to reuse configuration pieces, but could also be used to minimize the impact of a breach.

- Of course he odds are you’re going to integrate the Terraform into a CI/CD pipeline at some stage, so make sure credentials into the Terraform repo are also secure, otherwise you’ve undone your previous security steps.

- Minimize breach windows through credentials tokens and certificate hanging. If you use Let’s Encrypt (automated certificate issuing solution supported by the Linux Foundation). Then 90 day certificates isn’t new.

Paranoid?

This may sound a touch paranoid, but as the joke goes….

Just because I’m paranoid, it doesn’t mean they’re not out to get me

Fundamental Security vs Standardisation?

As it goes the standardisation is actually a dimension of security. (This article illustrates the point and you can find many more). The premise is, what can be ensured as the most secure environment, one that is consistent using standards (defacto or formal) or one that is non standard and hard to understand?

That consistency and predictability are important not just for code if you look at any

That consistency and predictability are important not just for code if you look at any

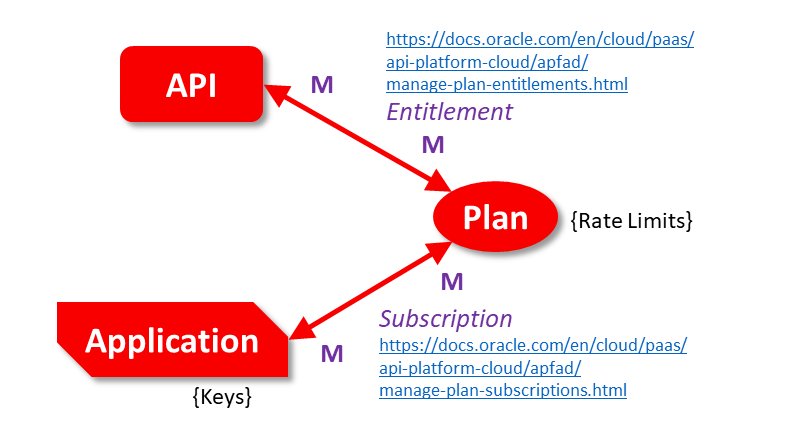

There are circumstances in which notifications from the Oracle API Platform CS could be seen as desirable. For example, if you wish to ensure that the developers are defining good APIs and not accidentally implementing APIs that hit the

There are circumstances in which notifications from the Oracle API Platform CS could be seen as desirable. For example, if you wish to ensure that the developers are defining good APIs and not accidentally implementing APIs that hit the

The Oracle API Platform adopted an intelligent pricing model by basing costs on API call volumes and Logical gateway node groupings per hour. In our book about the

The Oracle API Platform adopted an intelligent pricing model by basing costs on API call volumes and Logical gateway node groupings per hour. In our book about the  I’ve started to subscribe to the

I’ve started to subscribe to the  Ensuring the API has mitigation’s against the classic

Ensuring the API has mitigation’s against the classic

You must be logged in to post a comment.