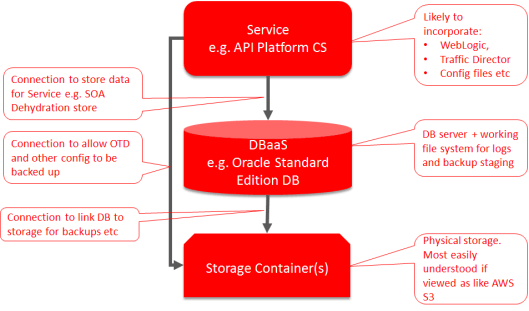

Oracle Cloud is growing and maturing at a tremendous rate, if the breadth of its PaaS capabilities is any indication. However, there are a few gotchas out there that can cause real headaches if they catch you. These typically involve processes that cut across different functional areas. A common middleware stack (API CS, SOA CS, OIC, etc.) will look something like the diagram above.

As the diagram shows, when you build the cloud services, each layer is configured with the credentials it needs to reach the layers below it (although Oracle has Oracle-managed versions of many services in the pipeline, where this will probably be hidden from us). When it comes to credentials, Oracle (rightly) reminds you that your password should be changed on a regular basis. What is not so clear is that changing it affects the linkages between the layers.

Once you have changed your password, if you used your own credentials to authenticate between the layers, things will start breaking unless you then correct the details on each layer. The service-to-database link is obvious and relatively easy to remember; after all, these services are more than likely on your dashboard as well. Storage, however, isn't shown on the dashboard by default, so it becomes very easy to overlook.

The impact can be subtle at first. Because the DBaaS appears to run its DB storage within the VM, the database will continue to run without issue. If you have the DB configured to back up automatically, the backups will kind of work. I say kind of because the DB writes its backup to a file partition on the DBaaS VM, and once the backup completes, these files are transferred to Storage. With the credentials now out of date, that transfer fails, but the cloud doesn't alert you to the fact, so unless you check regularly, backups could fail for a long time. You only see trouble when the file partition becomes full. This also affects any DB working space. The net result is that sooner or later the DB can't handle queries from the application and everything grinds to a halt.
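Since the first visible symptom is the local backup partition filling up, a periodic usage check is one cheap way to notice the problem before the DB stalls. The sketch below is a minimal illustration using only the Python standard library; the 80% threshold and the `"."` path are placeholders, not values from Oracle, so point it at whichever partition your DBaaS VM actually writes backups to.

```python
import shutil


def is_over_threshold(used: int, total: int, warn_fraction: float) -> bool:
    """True when used/total has reached the warning fraction."""
    return used / total >= warn_fraction


def partition_usage(path: str) -> float:
    """Fraction of the filesystem holding `path` that is currently in use."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total


def check_backup_partition(path: str, warn_fraction: float = 0.8) -> bool:
    """Return True if usage is below the threshold; print a warning otherwise."""
    usage = shutil.disk_usage(path)
    if is_over_threshold(usage.used, usage.total, warn_fraction):
        print(f"WARNING: {path} is {usage.used / usage.total:.0%} full; "
              "backups may not be reaching Storage")
        return False
    return True


if __name__ == "__main__":
    # Placeholder path: substitute the partition the DB backups are written to.
    print(check_backup_partition("."))
```

Run from cron (or any scheduler) on the VM, a check like this turns a silent failure into a visible one; it does not diagnose the credential problem itself, it only flags the symptom.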

Unpicking the problems can be fiddly; essentially, two things need to be done:

- Update the wallet file so the DB can start using the storage

- Flush out the backup files, for example by deleting them once you have signed into the DB VM using SSH.
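If you do flush files by hand over SSH, a dry-run-first approach reduces the risk of deleting the wrong thing. The sketch below is hypothetical: the directory, the age cutoff and the assumption that old files are safe to remove are all illustrative, and Oracle's own documentation remains the authority on which backup files can be deleted once they have (or have not) reached Storage.

```python
import os
import tempfile
import time
from pathlib import Path
from typing import List, Optional


def stale_backup_files(backup_dir: str, max_age_days: float,
                       now: Optional[float] = None) -> List[Path]:
    """Regular files in backup_dir last modified more than max_age_days ago."""
    cutoff = (time.time() if now is None else now) - max_age_days * 86400
    return sorted(p for p in Path(backup_dir).iterdir()
                  if p.is_file() and p.stat().st_mtime < cutoff)


def flush_backup_files(backup_dir: str, max_age_days: float,
                       dry_run: bool = True) -> List[Path]:
    """List (dry run) or delete stale files; returns the files selected."""
    stale = stale_backup_files(backup_dir, max_age_days)
    for path in stale:
        if dry_run:
            print(f"would delete {path}")
        else:
            path.unlink()
    return stale


if __name__ == "__main__":
    # Demo on a throwaway directory rather than a real backup location.
    with tempfile.TemporaryDirectory() as demo_dir:
        old = Path(demo_dir, "old.bkp")
        old.write_text("x")
        Path(demo_dir, "new.bkp").write_text("y")
        ten_days_ago = time.time() - 10 * 86400
        os.utime(old, (ten_days_ago, ten_days_ago))
        flush_backup_files(demo_dir, max_age_days=7)  # dry run: lists old.bkp only
```

Review the dry-run output first, and only then rerun with `dry_run=False`.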

There is documentation to support the resolution …

or an alternative approach (this requires SSH sign-in) at …

The password correction can be done using REST APIs. When executing the REST call, we experienced some issues using cURL, but found Postman both easier for configuring the values and clearer in showing whether the call succeeded or failed (if it fails, look at the raw result panel).
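For anyone who prefers scripting the call to using Postman, the general shape is an authenticated HTTP request whose raw response body you keep for diagnosis. The sketch below uses only Python's standard `urllib`; the endpoint URL, the PUT method, Basic authentication and the `password` field name are all hypothetical placeholders, not Oracle's API, so take the real values from the documentation for your service.

```python
import base64
import json
import urllib.error
import urllib.request

# HYPOTHETICAL endpoint for illustration only; use the URL from Oracle's docs.
ENDPOINT = "https://example.invalid/hypothetical/credentials"


def build_password_update(endpoint: str, user: str, password: str,
                          new_password: str) -> urllib.request.Request:
    """Build (but do not send) a PUT request carrying the new password as JSON."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    body = json.dumps({"password": new_password}).encode()  # hypothetical field
    return urllib.request.Request(
        endpoint,
        data=body,
        method="PUT",
        headers={"Authorization": f"Basic {token}",
                 "Content-Type": "application/json"},
    )


def send(request: urllib.request.Request) -> tuple:
    """Send the request and return (status, raw body), like Postman's raw panel."""
    try:
        with urllib.request.urlopen(request, timeout=30) as resp:
            return resp.status, resp.read().decode()
    except urllib.error.HTTPError as err:
        # Error responses still carry a body; the raw text is the best clue.
        return err.code, err.read().decode()
```

Keeping the raw body on failure mirrors the tip above about Postman's raw result panel: the status code alone rarely explains what went wrong.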

With the DB revived and the passwords fixed, it will happily report that it can back up, whether triggered manually or by the automatic schedule. But the service layer also has a backup point in the UI, and if or when that runs, it copies parts of the file system to storage and still fails. This is because the service has its own direct connection to the storage. Until you start looking very carefully at the messages, the whole thing can be very disconcerting: one view says backups work, while another says they don't.

So remember that the service also needs its storage credentials refreshed. The process may vary between services (JCS, SOA CS, etc.). The following documentation is known to work for the API Platform CS …

The Good News …

The nearly good news is that this is not an uncommon support issue. So if you do get caught out, it is possible to remediate, BUT because Oracle doesn't want your credentials, you are given docs to follow and have to run the steps yourself.

The really good news is that, rather than just letting people get caught out, Oracle is working on automating the cascade of credential changes. But this isn't going to happen overnight, as it effectively means each product will need APIs that allow its credentials to be modified, if it doesn't already have them. And if each product takes a slightly different API approach to this requirement, it is going to take time to extend the cloud management tier to examine which services you have and walk the dependency chain(s), which could become complex, particularly if a DB is shared, not to mention dealing with the different flavours of API for this task.
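To see why a shared DB complicates things, it helps to treat the stack as a dependency graph and ask which components are affected when one link breaks. The sketch below is a plain breadth-first traversal over a hypothetical, hand-written map based on the layers described earlier (services connect to a DB and directly to Storage; the DB connects to Storage); it is not an Oracle inventory API.

```python
from collections import deque
from typing import Dict, List

# Hypothetical map: each component lists what it holds credentials for.
DEPENDS_ON: Dict[str, List[str]] = {
    "API Platform CS": ["DBaaS-1", "Storage"],
    "SOA CS": ["DBaaS-1", "Storage"],
    "DBaaS-1": ["Storage"],
}


def affected_by(changed: str, depends_on: Dict[str, List[str]]) -> List[str]:
    """Every component that directly or transitively relies on `changed`."""
    # Invert the edges so we can walk from a resource up to its dependents.
    dependents: Dict[str, List[str]] = {}
    for component, targets in depends_on.items():
        for target in targets:
            dependents.setdefault(target, []).append(component)

    seen = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for parent in dependents.get(node, []):
            if parent not in seen:  # guards against revisits and cycles
                seen.add(parent)
                queue.append(parent)
    return sorted(seen)
```

With this map, a broken Storage link affects `DBaaS-1` directly and both services through it as well as through their own Storage connections, which is exactly the kind of fan-out that makes a shared DB harder to handle automatically.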

Pingback: PaaS (Process & Integration) Partner Community Newsletter June 2018 | SOA Community Blog

Pingback: Lessons in Oracle Cloud Password Management by Phil Wilkins | PaaS Community Blog