A rapidly growing number of articles have been written about the limited launch of Mythos, a new LLM. The evolution of models has helped quickly advance AI-assisted software development, but it is the capabilities of Mythos and Project Glasswing that have really grabbed attention and raised concern.

Glasswing is an initiative that gives major partner software and service vendors access to the Mythos model. Access is limited in this way because Mythos has made significant advances in identifying software vulnerabilities and generating exploits for them. Anthropic’s Red Team illustrated this by finding bugs in the OpenBSD operating system that had evaded detection for as long as 27 years. While the BSD family of operating systems isn’t as pervasive as Linux, both share a similar open ethos and have a community large enough to keep them active and maintained. The underlying message is that such vulnerabilities can be found and exploited, and there are certainly opportunities to do so elsewhere, in software that affects a great many more users, such as Firefox.

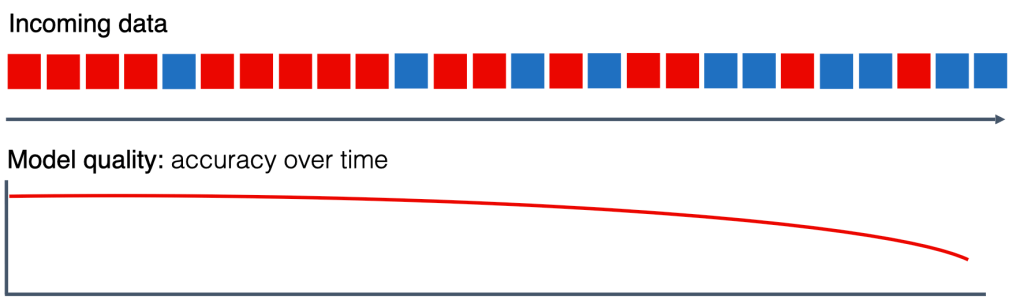

Having key software vendors, such as OS and browser vendors, get access is certainly a positive step, but it doesn’t address a key consideration. Applying code fixes and releasing updates does not, by itself, make anyone safer. The real challenge is for end users and organisations to recognise the need to roll out updates quickly. This is where the true source of concern should lie. The concerns …

- Organisations don’t always roll out patches as soon as they’re available. There is an element of testing to ensure there is no adverse impact on each organisation’s setup. Even a simple browser change can affect an application’s behaviour.

- Change represents risk, and organisations that experience issues during rollouts become increasingly risk-averse. This is ironic, since holding back updates increases exposure, but it is a very human reaction.

- Vendors’ patching tends to prioritise the latest versions of products, which can create dependency challenges. Bringing software up to date can result in a growing infrastructure footprint: more storage, memory and CPU are needed as vendors add capabilities and features to compete and meet customer needs, driving continuous growth. That can add real cost, particularly in highly distributed use cases such as user desktops and IoT devices. As patches accumulate, devices may no longer have the capacity to service the new footprint properly. Consider why people replace smartphones: sometimes it’s for hardware features like a better camera, but often it’s simply that there isn’t enough storage, or the device can’t cope with all the apps, photos and so on.

- Digging into some of the details from the Red Team shows that the LLM usage costs to uncover the vulnerabilities run from $50 to $20,000. This could have ramifications for smaller, more specialised software products, where the cost of regularly rerunning the analysis could outstrip potential revenue. As a result, we could see software prices climb, or companies simply stop producing products we depend on. Bad actors may also want to recoup their costs more quickly by accelerating the use of new exploits; in other words, more attacks, arriving sooner. Such considerations will put more pressure on the speed of patch cycles.

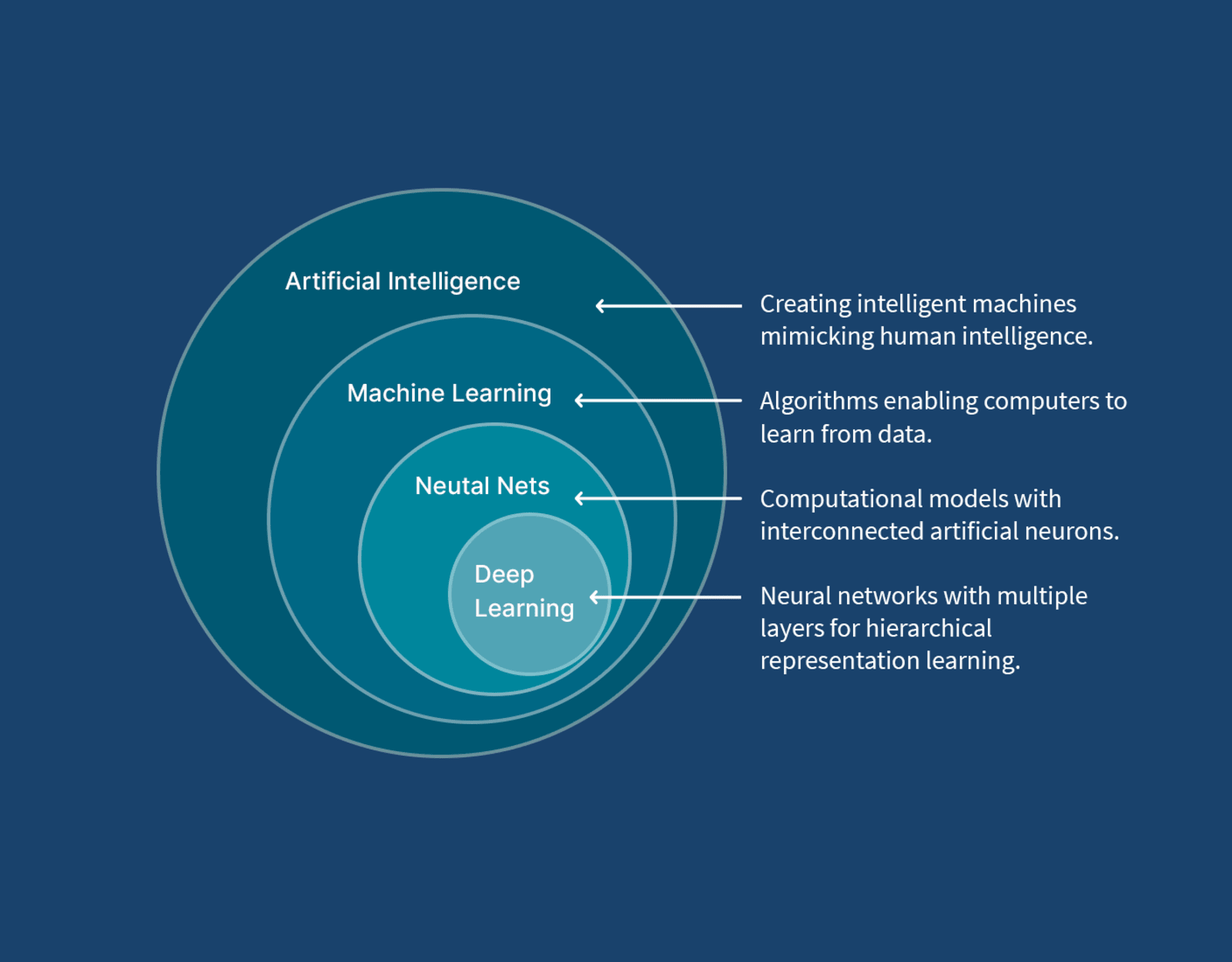

- This level of capability suggests that we really do need people to shift from boundary-style security to security at every layer of our solutions. That’s not just authentication, but also code being defensive, validating the data values it is given, and so on.
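To make the idea of defensive code at an inner layer a little more concrete, here is a minimal sketch in Python. The function name `parse_transfer_amount` and the `MAX_TRANSFER` limit are invented purely for illustration. The point is only that the inner function re-checks the value it is handed rather than trusting that a gateway or front end has already done so.

```python
from decimal import Decimal, InvalidOperation

# Hypothetical business limit, assumed for illustration only.
MAX_TRANSFER = Decimal("10000.00")


def parse_transfer_amount(raw):
    """Validate an amount inside the service, even if an outer layer already checked it."""
    # Reject anything that is not a reasonably short string.
    if not isinstance(raw, str) or len(raw) > 20:
        raise ValueError("amount must be a short string")
    try:
        amount = Decimal(raw.strip())
    except InvalidOperation:
        raise ValueError("amount is not a number") from None
    # Decimal accepts "NaN" and "Infinity", so rule those out explicitly.
    if not amount.is_finite():
        raise ValueError("amount must be finite")
    if amount <= 0 or amount > MAX_TRANSFER:
        raise ValueError("amount is out of range")
    # Allow at most two decimal places.
    if amount != amount.quantize(Decimal("0.01")):
        raise ValueError("amount has too many decimal places")
    return amount
```

The same pattern of checking type, format, range and precision applies to any value crossing a layer boundary. A real system would take the specific rules from its own requirements.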

All of this means we have to change mindsets from doing just enough, or simply putting a front-line security layer in place, to embedding security at every layer. As end users, we must start to adopt several behaviours:

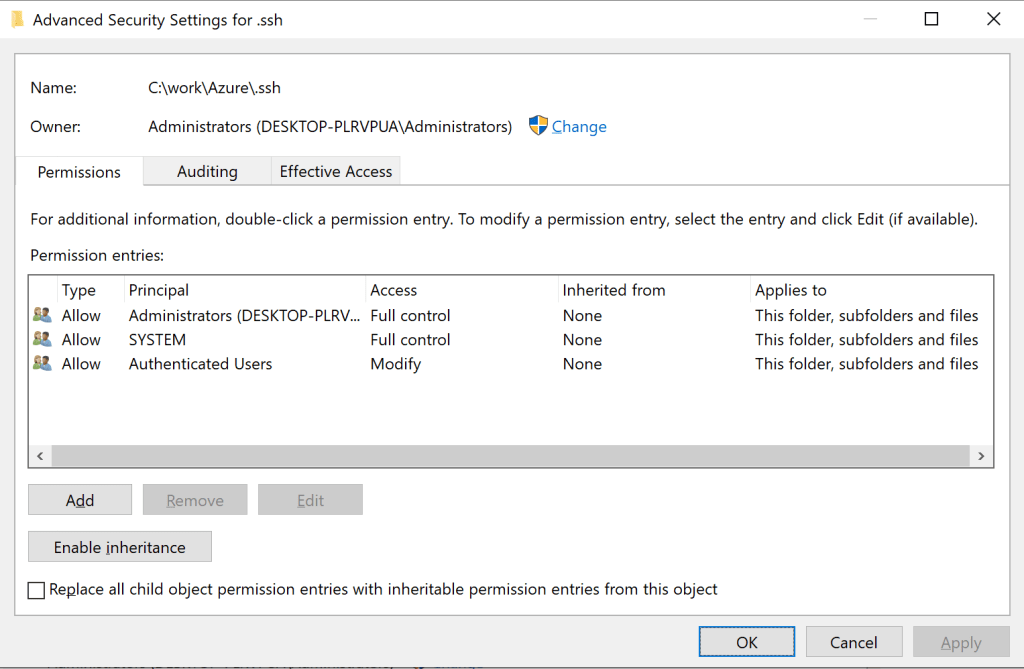

- Be security-conscious with our own devices, keeping software up to date and patched. I would consider my family to be above average when it comes to being tech-savvy, but even so I still have to go in and run Windows updates on laptops, for example.

- Start voting with our feet. Many of the services we use are largely or entirely software-powered (banks, energy providers). If those providers show signs of not taking security seriously enough, it is time to go elsewhere before we become victims.

Keeping up

One observation from the Mythos and Project Glasswing reporting is that the advancements are significant step changes, not incremental improvements (for example, Anthropic’s Sonnet 4.6 was only released a couple of months ago, and didn’t score highly for creating exploits, although it was better at detection). This suggests a couple of things …

- IT law has always played a game of catch-up, but if the advancements are going to be this large and this frequent, we have to start legislating against hypotheticals and allow legal precedents to produce the fine-detail interpretations.

- We may have to consider big-brother observation of AI use, mitigated by strong transparency rules governing the handling of findings.

- Does the idea that we need to start looking at incorporating something like Asimov’s Three Laws of Robotics into LLMs still look far-fetched?

- Do we need to start thinking about mitigating the risk of deep exploits by bringing back the option of requiring some systems to be air-gapped?

Hyperbole?

It would be easy to put this down to hyperbole, or to wanting to be click-baity, but this is gaining a lot of high-profile attention. Just consider these examples:

- What Is Claude Mythos—And Why Anthropic Won’t Let Anyone Use It (Forbes)

- Anthropic’s new AI tool has implications for us all – whether we can use it or not (The Guardian UK)

- Mythos autonomously exploited vulnerabilities that survived 27 years of human review. Security teams need a new detection playbook (VentureBeat)

- Anthropic’s Mythos Will Force a Cybersecurity Reckoning—Just Not the One You Think (Wired)
